LASA

Current highlights

Robotics at EPFL is an interfaculty initiative that connects and promotes EPFL’s advanced expertise in robotics and autonomous systems. Check it out!

LASA teamed up with MIT Press to publish a textbook presenting a wealth of machine learning techniques that make robot control more flexible and safer when robots interact with humans.

Research at LASA

Current project grants

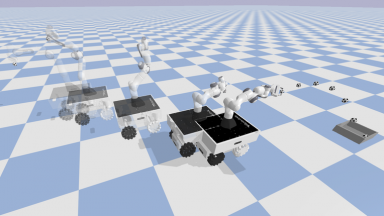

Robetarme

Human-robot collaborative construction system for shotcrete

SAHR

ERC Advanced Grant: Skill acquisition in humans and robots (SAHR)

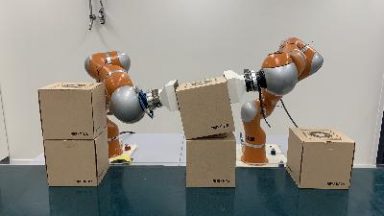

Hasler Stiftung

Four-arm manipulation and its applications to surgery

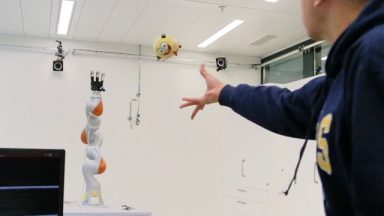

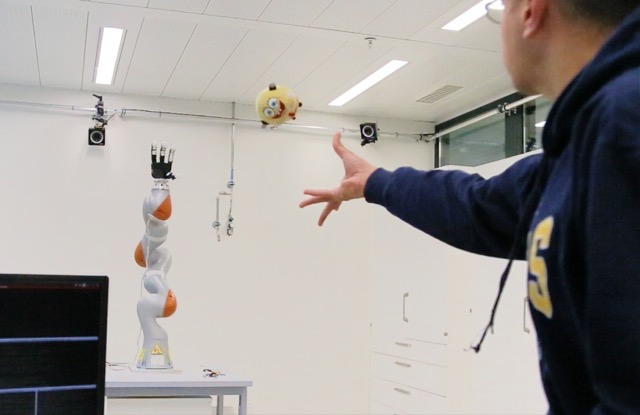

EU I.AM Project

From pick-and-place to grab-and-toss

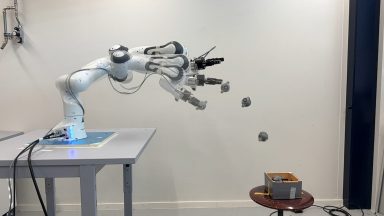

EU DARKO

Dynamic agile robots that learn and optimize operations

Microsurgery

Qualitative assessment of skills and skill acquisition in microsurgery

Past project grants

EU Crowdbot project

Safe navigation of robots in crowds

CHISTERA CORSMAL

Estimating the physical properties of objects for safe handover to and from humans

Swiss SNF – NCCR

Robotics National Center of Competence in Research

Secondhands

Creating a proactive robot assistant for maintenance technicians

EU CogIMon

An EU-funded research project on Cognitive Interactive Motion

Alterego

An EU-funded project on humanoid robotics and virtual reality to improve social interactions